Termux introduction

Let me start by saying "Termux is cool". Termux is the application I have long wanted. Of course, Termux is only cool if you have something to use it for, but Termux in combination with a USB keyboard can turn a mobile phone into a fully functional workstation. That, however, requires that you can use a Linux command line to do the work.

So what is Termux?

The people behind Termux describe it like this:

"Termux is an Android terminal emulator and Linux environment app that works directly with no rooting or setup required. A minimal base system is installed automatically - additional packages are available using the APT package manager."

Termux takes advantage of the fact that Android is already built on a Linux kernel. Termux complements this Linux kernel with a lot of tools and an interface that give you a well-equipped Linux command-line environment (CLI) on your mobile phone or tablet.

You have long been able to run a full Linux on an Android device, but it was never straightforward. With Termux it becomes easier and more integrated. You do not have to be root to run Termux.

Termux offers solutions to some problems that I have previously had difficulty finding satisfactory solutions to.

Here I will review some of the benefits I have experienced with Termux.

1. View images and video on the console

I am an artist. I often work with video and pictures, and I often use command-line (CLI) programs such as Netpbm, mplayer and ffmpeg in my work.

One of the things I need is to start a video and stop it again without taking my fingers off the keyboard, because I do some video editing with ffmpeg from a Linux terminal. In an ordinary Linux environment with X11 you can easily start a video from a terminal this way, but on Android it has always been fiddly. In Termux it is possible to display both images and video in the terminal window itself. The quality is not good, but a low-resolution version is enough to quickly check what a picture shows or to watch a video clip you want to cut. This is done with the media player MPV, which is part of the Termux package repository:

mpv --no-config --pause --vo=tct https://stallman.org/rms.jpg

mpv --no-config --vo=tct fc4.mp4

2. Take advantage of the SD card

For as long as I have been working on this, it has been difficult to write to the SD card on the phone. That meant the extra space could not be used, and I had to keep an entire Linux system as well as all my files in the phone's very limited internal memory. With Termux the user has one folder in the phone's internal memory and one folder on the SD card, and both can be read from and written to. This makes it possible to use the SD card as your normal working drive and keep all folders and files there, so that they do not take up space in the phone's memory and much more storage space is available.

Before you can access the various drives, you must execute the command

termux-setup-storage

3. Playing sound

As I said, when you like to work with multimedia from the command line, it is nice to be able to play an audio file and hear it. That has been difficult in the various Linux solutions I have used before. With Termux it works like a charm. There are two commands for playing sound in Termux. One is the media player MPV that I have just mentioned. The other is a dedicated audio player called play-audio.

4. Super good integration with the Android system

It all works seamlessly. You do not have to set up the language separately, and everything seems to be closely integrated with the rest of the system, which makes it quick and easy to get to. Termux can be installed as an ordinary app from the Play Store, and it takes up less than a third of the space needed for the full Linux distributions I have installed before.

Last but not least.

5. Community

Termux has a community. You can find conversations, wiki pages and tutorials on most aspects of Termux. This means everything when you need to get to know a system and customize it.

Those were the five significant advantages that make me very excited right now about Termux compared with other Linux-on-Android solutions. Now I will briefly introduce myself and my approach to the area. Then I would like to thank the developers behind Termux, and after that I will show how Termux can be set up and adapted into a well-functioning Linux environment on your mobile phone.

I have been a Linux enthusiast since I first heard about Linux, which was in 1999. Since then, I have tried to install Linux in many variants on every possible PC. When Android came along I started experimenting with creating a Linux environment on Android, and in doing so I built up a solid base of practical experience. For a period I leaned more towards the Raspberry Pi and experimented with building a small, energy-efficient workstation.

Around the turn of the year to 2019 I put the Raspberry Pi aside and looked again at the possibilities of running Linux in a terminal on Android. That was when I came across Termux, which I had not heard of before.

Well, that was a little about me. Now I would like to give a big, warm thanks to Fredrik Fornwall and the whole group behind Termux. I also think Kevin Boone deserves a mention. He developed KBOX, Linux on Android, long before Termux existed. Kevin Boone put into practice the idea of copying a Linux system on top of the Linux base that Android already has.

Configuration and customization

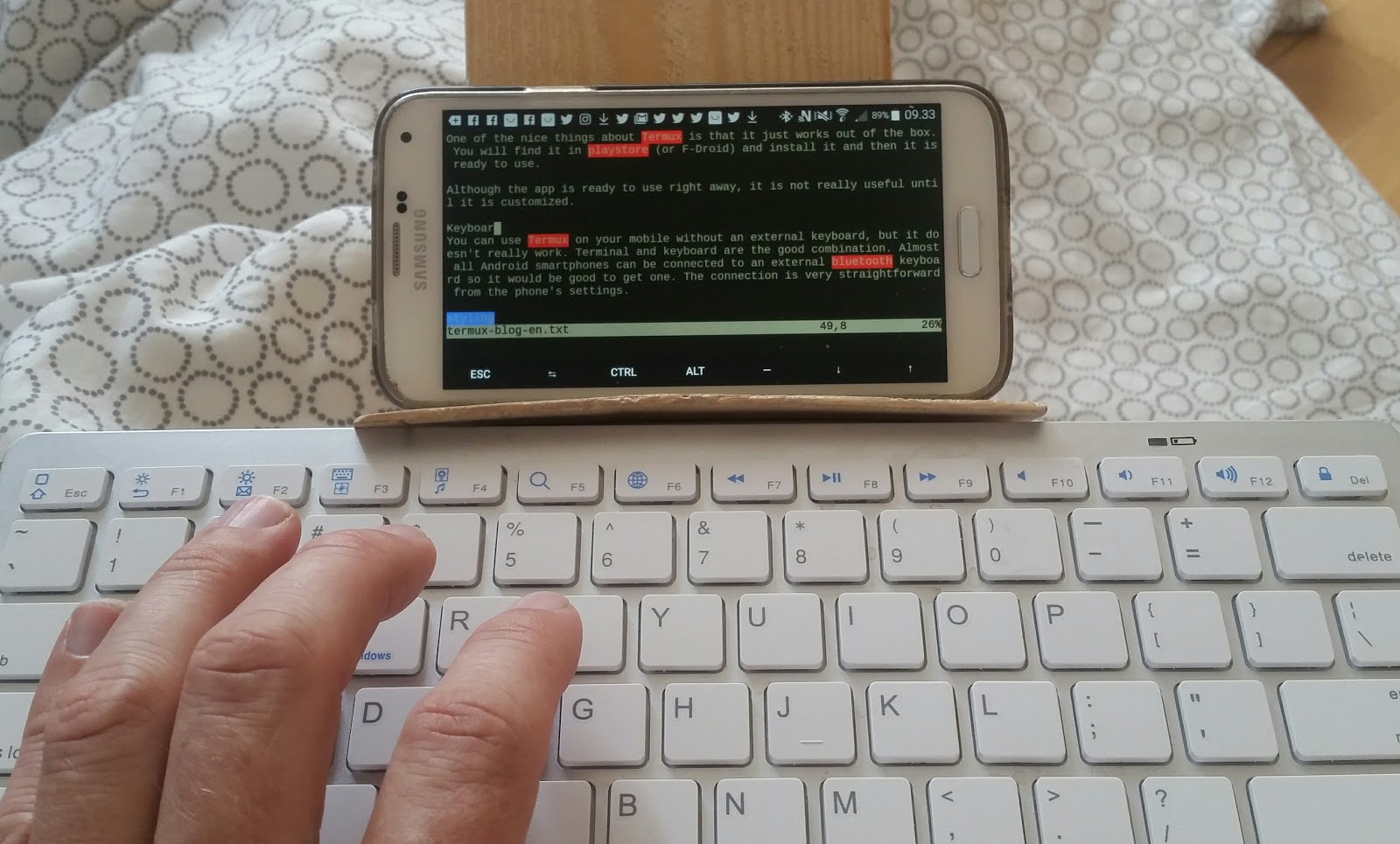

One of the nice things about Termux is that it just works out of the box. You find it in the Play Store (or F-Droid), install it, and then it is ready to use.

Although the app is ready to use right away, it is not really useful until it is customized.

Keyboard

You can use Termux on your Android device without an external keyboard, but it is not really practical. A terminal and a keyboard belong together. Almost all Android smartphones can be connected to an external Bluetooth keyboard, so it would be good to get one. Setting up the connection from the phone's settings is very straightforward.

Styling

A black-and-white terminal is a little monotonous and uninspiring to look at, especially if you have to spend hours working in it every day. I have a dark green background and a light green font. It is nice and comfortable to look at. But how do you get some colors?

You need to go to the Play Store (or F-Droid) and install Termux:Styling, which costs 3-4 dollars. Once it is installed, you can start Termux and long-press the screen

more -> style

Then you get a lot of color schemes and fonts to choose from, and you can pick the ones you like best.

Applications

First it is good to have the environment updated, so run the command

pkg update

If you want to install a program, you can do it with

pkg install

followed by the name of the package. So if you want to install vim, which is a very good text editor (although it takes some time to learn), just enter

pkg install vim (enter)

Files and Folders

Basically, Termux provides two folders where you can put your own files and create sub-folders. The one is on the phone's internal storage and the other is on the phone's SD card.

The first is:

/data/data/com.termux/files/home

It's your home directory

The other is:

/data/data/com.termux/files/home/storage/external-1

And that's your folder on the SD card

Well, before you can get this to work, run the command:

termux-setup-storage

and then say "yes" when Termux asks for access to the various storage areas on the device.
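To check that the storage setup worked, you can look inside the storage folder in your home directory. The following is only a small sketch: it assumes that the links end up in `$HOME/storage`, as the `external-1` path above suggests, and the variable name `storage_dir` is just something chosen for this example.

```shell
# Folder inside the home directory where the storage links are expected.
storage_dir="$HOME/storage"

if [ -d "$storage_dir" ]; then
    # Each entry points to one storage area; external-1 is the SD card folder.
    ls -l "$storage_dir"
else
    echo "No storage folder yet; run termux-setup-storage first."
fi
```

If the folder is missing, the sketch only prints a reminder and changes nothing.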

System path and Bash

Now we have to look at some setup of the local user environment in the system. We need to add a new folder to the system path.

What is the system path? The system path is a list of folders that the system looks for executable files in. If you execute a text command such as "ls" then the system checks whether there is an executable file with that name in one of the folders in the system path.

You can add a folder to the system path. I need to add a folder for my own scripts so that I can call a script by its name from anywhere in the system.

To see the system path, type the command:

echo $PATH

Here is the output from the command before I add a new folder.

$ echo $PATH

/data/data/com.termux/files/usr/bin:/data/data/com.termux/files/usr/bin/applets:/data/data/com.termux/files/home
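The output is easier to read with one folder per line. The sketch below does that, and it also checks whether a particular folder is already in the path. The helper name `in_path` is made up for this example, and `$HOME/inpath` is the scripts folder that is created later in this text.

```shell
# Print each folder in the system path on its own line.
printf '%s\n' "$PATH" | tr ':' '\n'

# Hypothetical helper: succeeds if the given folder is already in PATH.
in_path() {
    case ":$PATH:" in
        *":$1:"*) return 0 ;;
        *) return 1 ;;
    esac
}

if in_path "$HOME/inpath"; then
    echo "inpath is already in the system path"
else
    echo "inpath is not in the system path yet"
fi

# Show which executable file the shell finds for the command "ls".
command -v ls
```

Wrapping the path in colons before matching means a folder is only found as a whole entry, not as part of a longer folder name.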

I want to add the new folder using a setup file called

.bashrc

As you can see, the file has a dot to begin with. Files starting with a dot are hidden files in Linux. That is, you cannot see them with a regular "ls" command. But you can see them by adding a flag to the "ls" command.

ls -a

displays hidden files.
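If you want to see the difference for yourself without touching your real files, here is a small sketch that uses a throwaway folder. The folder and the file name `notes.txt` exist only for this illustration.

```shell
# Create a temporary folder with one normal file and one hidden file.
demo_dir=$(mktemp -d)
touch "$demo_dir/notes.txt" "$demo_dir/.bashrc"

# A plain ls leaves out names that start with a dot.
ls "$demo_dir"

# With -a the hidden file is listed too, together with "." and "..".
ls -a "$demo_dir"
```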

There is no .bashrc file in Termux to start with, so you have to create it yourself in your home directory, which is where you start when you open Termux (/data/data/com.termux/files/home).

First I create the folder I want to use for my scripts:

mkdir inpath

Then the "inpath" folder is created

Now I need it added to the system path so I can use my scripts from anywhere in the system. Therefore, I now create a file called ".bashrc" in my home directory.

I use my favorite editor "vim", but you can also use "nano" or another editor. I type:

vim .bashrc

Then vim opens with an empty file, and you must now enter a few commands in it. These commands will be executed each time you open Termux.

Insert the following text into the file. (Termux supports copy and paste: just long-press the screen and select paste.)

---------------------------

---------------------------

export PATH=$PATH:/data/data/com.termux/files/home/inpath

if [ -f ~/.bash_aliases ]; then

. ~/.bash_aliases

fi

---------------------------

---------------------------

Once you have entered these lines, save the file.

It is the first line, i.e. the one that starts with "export", that adds my own folder to the system path. The next 3 lines are about something else: they load a file with some aliases if that file exists. You can look up what aliases are and how they work on the web. Right now I just want to say that we need an alias for navigating the system. We would like to be able to get back to our home directory quickly, no matter where we are in the file system. For that we will use an alias. But first we need to create a new file called ".bash_aliases" with this content:

---------------------------

---------------------------

alias home='cd /data/data/com.termux/files/home'

# Add your own aliases gradually

---------------------------

---------------------------

Once you have made the file, save it and close Termux by typing "exit" and pressing Enter twice. Then reopen Termux, and now you should be able to return to your home folder from anywhere in the file system by typing

home (enter)
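If the alias does not seem to work, you can ask the shell whether it knows about it. This is only a sketch: the first line repeats the definition from ".bash_aliases" so that the example can be tried on its own, while in a normal session that definition is already loaded through ".bashrc".

```shell
# The same definition as in .bash_aliases.
alias home='cd /data/data/com.termux/files/home'

# Print the stored definition; this fails with an error if no such alias exists.
alias home
```

An error from the second command usually means that ".bash_aliases" was not loaded, so the lines in ".bashrc" are the first place to look.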

And the inpath folder has now become part of the system path so you can execute the scripts in the folder from anywhere in the file system. However, there is a package you need to install before you can make your shell script work properly.

pkg install termux-exec

Once you have installed termux-exec, your shell scripts should work fine.
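To try the whole setup, you can put a tiny script in the inpath folder and call it by name. The sketch below makes a few assumptions: the script name `hello-termux` and its message are made up for this example, and the `#!/bin/sh` first line is the usual Linux form, which on Termux I expect to depend on the termux-exec package just mentioned.

```shell
# The scripts folder from this text.
script_dir="$HOME/inpath"
mkdir -p "$script_dir"

# Write a tiny script (hypothetical name: hello-termux) into the folder.
cat > "$script_dir/hello-termux" <<'EOF'
#!/bin/sh
echo "Hello from inpath"
EOF

# Mark the file as executable so it can be run by name.
chmod +x "$script_dir/hello-termux"

# Make sure the folder is in the path for this session
# (.bashrc takes care of this each time Termux starts).
case ":$PATH:" in
    *":$script_dir:"*) ;;
    *) PATH="$PATH:$script_dir" ;;
esac

# Call the script by name from a different folder.
(cd / && hello-termux)
```

The script has to be made executable once with chmod. After that, only its name is needed.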

That was all for this time. I hope I will find time to return with more Termux tutorials.